This summer, I spent an evening reading GPT-3 demos on Twitter. Poetry, code, essays, business emails, all generated by a machine. Some of it was mediocre. Some of it was genuinely hard to distinguish from human writing. I kept scrolling, half fascinated and half unsettled, because for the first time it didn't feel like a party trick. It felt like a preview.

2020 has been a brutal year in most respects. But for artificial intelligence and machine learning, it's been one of the most consequential years on record. Not because of one breakthrough, but because several converged at once, and the world was paying attention.

GPT-3: when language AI became a product

In May, OpenAI published the paper describing GPT-3: 175 billion parameters, over 100 times larger than its predecessor. The model could write essays, generate code, translate languages, and answer questions, often with just a few examples to go on. That capability, called few-shot learning, was the real breakthrough. You didn't need to retrain the model for every new task. You just showed it what you wanted.
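To make "you just showed it what you wanted" concrete, here is a rough sketch of how a few-shot prompt gets assembled. The function name, the labels, and the translation examples are my own illustration, not a format OpenAI requires, and the step of actually sending the text to a model is left out.

```python
def build_few_shot_prompt(instruction, examples, query,
                          input_label="English", output_label="French"):
    """Assemble a few-shot prompt: an instruction, worked examples, then the new input.

    All names and labels here are hypothetical; the only idea being shown is
    that the "training" happens inside the prompt text, not in the weights.
    """
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"{input_label}: {source}")
        lines.append(f"{output_label}: {target}")
        lines.append("")
    # The final block has an input but no output: the model is expected
    # to continue the pattern and fill in the missing answer.
    lines.append(f"{input_label}: {query}")
    lines.append(f"{output_label}:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(
    "Translate English to French.",
    [("cheese", "fromage"), ("good morning", "bonjour")],
    "thank you",
)
print(prompt)
```

Swapping in a different task means changing the examples, not the model, which is the whole appeal.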

But what made GPT-3 different from previous research milestones wasn't just the model. It was the distribution. OpenAI didn't release the weights for anyone to download. They built an API. You pay for access. Microsoft licensed it exclusively in September. Within months, developers were building everything from copywriting tools to code generators on top of it. Language AI went from research artifact to commercial product in a single year.

Beyond language: transformers go everywhere

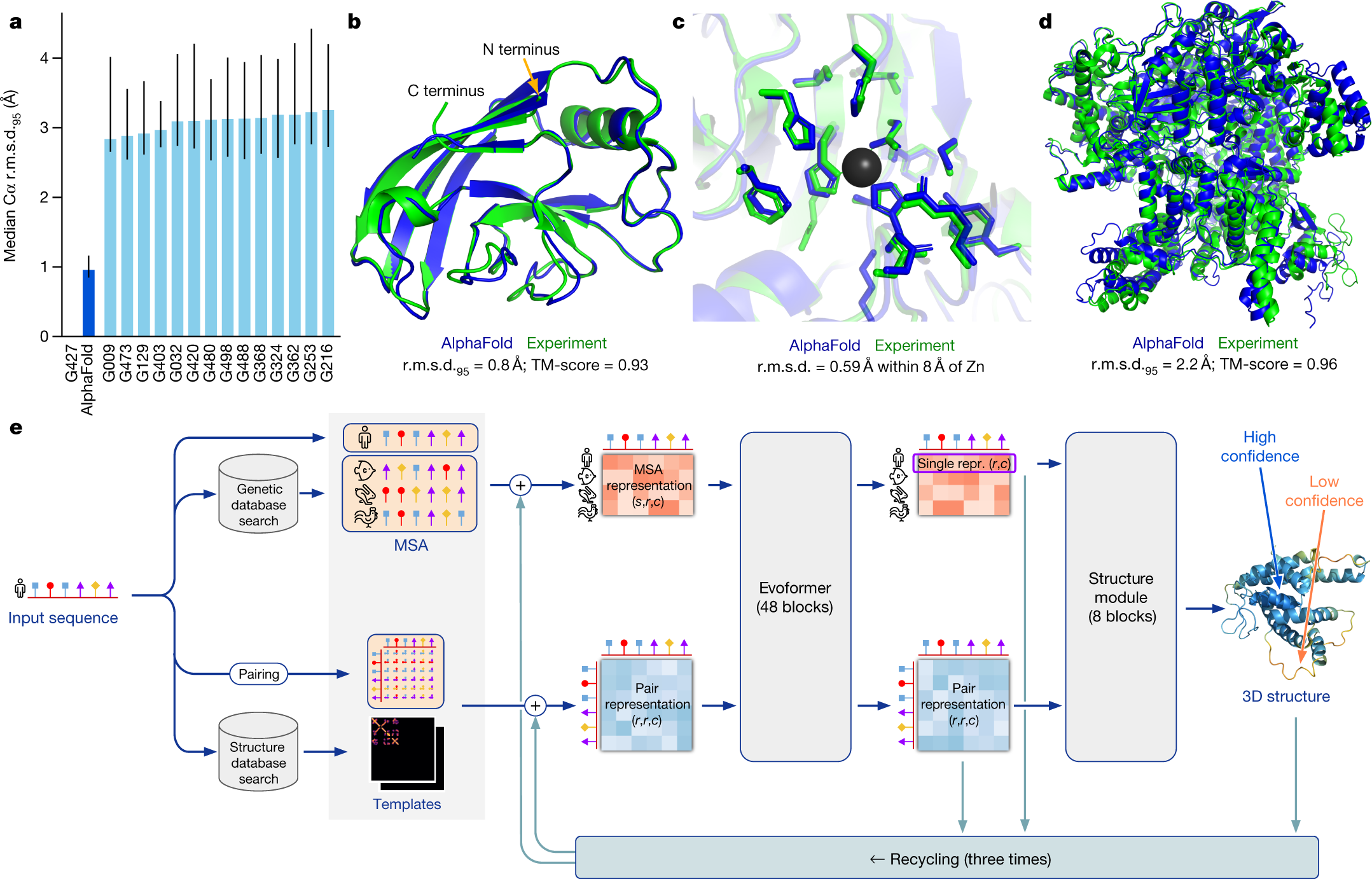

GPT-3 grabbed the headlines, but the quieter story of 2020 is that the transformer architecture broke out of NLP entirely. Researchers applied transformers to image classification, object detection, and protein sequence modeling. DeepMind's AlphaFold was widely hailed as a solution to protein structure prediction, a problem that had frustrated biologists for 50 years, with accuracy that rivaled experimental methods for many proteins.

Meanwhile, model distillation made it possible to shrink large models without significant performance loss, bringing powerful AI closer to practical deployment. The pattern across all of these developments is the same: techniques that were state-of-the-art in one domain are becoming building blocks across many.
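For readers curious what distillation looks like in practice, here is a minimal sketch of one common formulation: the small "student" model is trained to match the large "teacher" model's softened output probabilities. The logits and the temperature value are made up for illustration, and real systems typically combine this term with an ordinary loss on the true labels.

```python
import math


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature flattens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    Scaled by temperature squared, a common convention that keeps gradient
    magnitudes comparable across temperatures.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
    return temperature ** 2 * kl


# Hypothetical logits for a three-class problem.
teacher = [4.0, 1.0, 0.2]
student = [2.5, 1.5, 0.5]
print(distillation_loss(teacher, student))
```

The loss is zero when the student reproduces the teacher's distribution exactly and grows as the two diverge, so minimizing it pulls the small model toward the large one's behavior.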

AI meets education's crisis

While the research community was pushing models forward, the world was running a forced experiment in remote learning. At the peak of the closures, about 1.5 billion students, 91.3% of the world's enrolled learners, had their schooling disrupted. Coursera saw roughly a 450% increase in enrollments between March and June compared to the prior year. The demand for online education didn't just spike; it overwhelmed the infrastructure.

A friend who teaches high school told me in April that she was spending more time troubleshooting tech issues than teaching. "The kids who were already self-directed are fine," she said. "Everyone else is falling through the cracks." That gap, between students who can navigate self-paced digital learning and those who need more support, is exactly where AI has the most potential and the most distance still to cover. Adaptive learning systems, intelligent tutoring, automated feedback: the building blocks exist, but 2020 made it painfully clear how far we are from deploying them at the scale the world actually needs.

What this year meant

2020 was the year AI stopped being a future promise and became a present reality. The models got dramatically bigger and more capable. The applications moved from demos to commercial products. And a global crisis revealed both the enormous potential of intelligent systems and the gap between what's possible in a lab and what's available in a classroom.

The question going into 2021 isn't whether AI is ready. It's whether we are.